Robert Betts Laughlin (born November 1, 1950) is a professor of Physics and Applied Physics at Stanford University who, together with Horst L. Störmer and Daniel C. Tsui, was awarded the 1998 Nobel Prize in physics for his explanation of the fractional quantum Hall effect.

Laughlin was born in Visalia, California. He earned a B.A. in Physics from UC Berkeley in 1972 and his Ph.D. in physics in 1979 at MIT, Cambridge, Massachusetts, USA. From 2004 to 2006 he served as the president of KAIST in Daejeon, South Korea.

Laughlin holds views similar to George Chapline's on the existence of black holes. See: Robert B. Laughlin

The Emergent Age, by Robert Laughlin

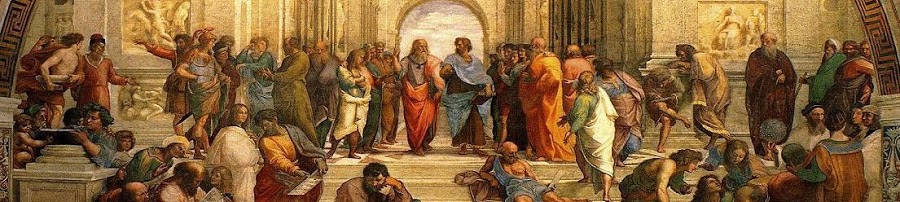

The natural world is regulated both by fundamental laws and by powerful principles of organization that flow out of them which are also transcendent, in that they would continue to hold even if the fundamentals were changed slightly. This is, of course, an ancient idea, but one that has now been experimentally demonstrated by the stupendously accurate reproducibility of certain measurements – in extreme cases parts in a trillion. This accuracy, which cannot be deduced from underlying microscopics, proves that matter acting collectively can generate physical law spontaneously.

Physicists have always argued about which kind of law is more important – fundamental or emergent – but they should stop. The evidence is mounting that ALL physical law is emergent, notably and especially behavior associated with the quantum mechanics of the vacuum. This observation has profound implications for those of us concerned about the future of science. We live not at the end of discovery but at the end of Reductionism, a time in which the false ideology of the human mastery of all things through microscopics is being swept away by events and reason. This is not to say that microscopic law is wrong or has no purpose, but only that it is rendered irrelevant in many circumstances by its children and its children’s children, the higher organizational laws of the world.

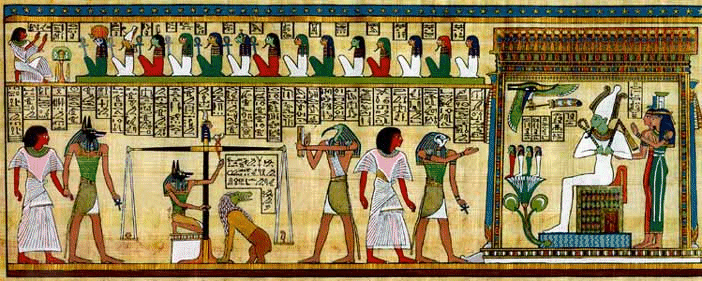

In general usage, complexity tends to be used to characterize something with many parts in intricate arrangement. The study of these complex linkages is the main goal of network theory and network science. In science there are at this time a number of approaches to characterizing complexity, many of which are reflected in this article. Definitions are often tied to the concept of a ‘system’ – a set of parts or elements which have relationships among them differentiated from relationships with other elements outside the relational regime. Many definitions tend to postulate or assume that complexity expresses a condition of numerous elements in a system and numerous forms of relationships among the elements. At the same time, what is complex and what is simple is relative and changes with time.

Some definitions key on the question of the probability of encountering a given condition of a system once characteristics of the system are specified. Warren Weaver has posited that the complexity of a particular system is the degree of difficulty in predicting the properties of the system if the properties of the system’s parts are given. In Weaver’s view, complexity comes in two forms: disorganized complexity, and organized complexity. [1] Weaver’s paper has influenced contemporary thinking about complexity. [2]

The approaches which embody concepts of systems, multiple elements, multiple relational regimes, and state spaces might be summarized as implying that complexity arises from the number of distinguishable relational regimes (and their associated state spaces) in a defined system.

Some definitions relate to the algorithmic basis for the expression of a complex phenomenon or model or mathematical expression, as is later set out herein.

I was given a link to the Complexity Map above, which I find very interesting. It is an interactive map, so I suggest visiting the link provided.
