….. a Frankenstein?:)

Seriously, there are defined differences between the human being and AI. I think people have a tendency to blur the lines when it comes to machinery. This of course required some reading, and the wiki quotes herein help to orient.

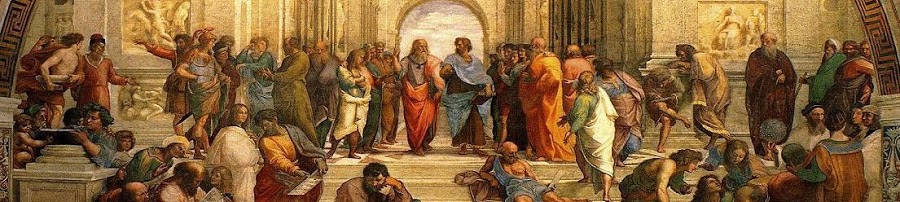

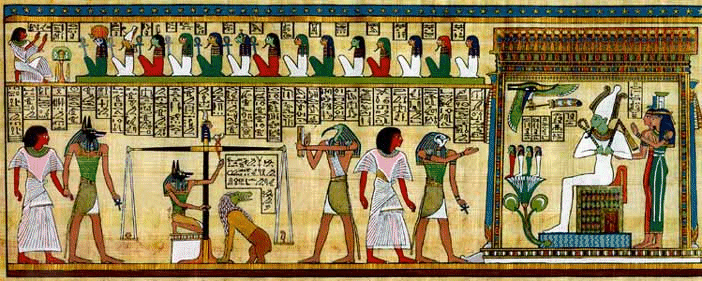

Of course, the pictures that come out of fiction are closely related to the approach taken in development, while in some respects they seem to represent more the development of the perfect human being.

It seems there is a quest “to develop” human beings, not just robots.

Artificial Intelligence (AI) is the intelligence of machines and the branch of computer science which aims to create it. Textbooks define the field as “the study and design of intelligent agents,”[1] where an intelligent agent is a system that perceives its environment and takes actions which maximize its chances of success.[2] John McCarthy, who coined the term in 1956,[3][4] defines it as “the science and engineering of making intelligent machines.”

The field was founded on the claim that a central property of humans, intelligence—the sapience of Homo sapiens—can be so precisely described that it can be simulated by a machine.[5] This raises philosophical issues about the nature of the mind and limits of scientific hubris, issues which have been addressed by myth, fiction and philosophy since antiquity.[6] Artificial intelligence has been the subject of breathtaking optimism,[7] has suffered stunning setbacks[8][9] and, today, has become an essential part of the technology industry, providing the heavy lifting for many of the most difficult problems in computer science.

AI research is highly technical and specialized, deeply divided into subfields that often fail to communicate with each other.[10] Subfields have grown up around particular institutions, the work of individual researchers, the solution of specific problems, longstanding differences of opinion about how AI should be done and the application of widely differing tools. The central problems of AI include such traits as reasoning, knowledge, planning, learning, communication, perception and the ability to move and manipulate objects.[11] General intelligence (or “strong AI”) is still a long-term goal of (some) research.[12]

Lacking a heart…..

Knowledge representation

Main articles: Knowledge representation and Commonsense knowledge

Knowledge representation[43] and knowledge engineering[44] are central to AI research. Many of the problems machines are expected to solve will require extensive knowledge about the world. Among the things that AI needs to represent are: objects, properties, categories and relations between objects;[45] situations, events, states and time;[46] causes and effects;[47] knowledge about knowledge (what we know about what other people know);[48] and many other, less well researched domains. A complete representation of “what exists” is an ontology[49] (borrowing a word from traditional philosophy), of which the most general are called upper ontologies.

Among the most difficult problems in knowledge representation are:

- Default reasoning and the qualification problem

- Many of the things people know take the form of “working assumptions.” For example, if a bird comes up in conversation, people typically picture an animal that is fist-sized, sings, and flies. None of these things are true about all birds. John McCarthy identified this problem in 1969[50] as the qualification problem: for any commonsense rule that AI researchers care to represent, there tend to be a huge number of exceptions. Almost nothing is simply true or false in the way that abstract logic requires. AI research has explored a number of solutions to this problem.[51]

- The breadth of commonsense knowledge

- The number of atomic facts that the average person knows is astronomical. Research projects that attempt to build a complete knowledge base of commonsense knowledge (e.g., Cyc) require enormous amounts of laborious ontological engineering: they must be built, by hand, one complicated concept at a time.[52] A major goal is to have the computer understand enough concepts to be able to learn by reading from sources like the internet, and thus be able to add to its own ontology.

- The subsymbolic form of some commonsense knowledge

- Much of what people know is not represented as “facts” or “statements” that they could actually say out loud. For example, a chess master will avoid a particular chess position because it “feels too exposed”[53] or an art critic can take one look at a statue and instantly realize that it is a fake.[54] These are intuitions or tendencies that are represented in the brain non-consciously and sub-symbolically.[55] Knowledge like this informs, supports and provides a context for symbolic, conscious knowledge. As with the related problem of sub-symbolic reasoning, it is hoped that situated AI or computational intelligence will provide ways to represent this kind of knowledge.[55]
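To make the “working assumptions” idea a little more concrete, here is a minimal sketch in Python of default reasoning with exceptions. It is only an illustration: the tiny knowledge base `KB` and the helper `lookup` are hypothetical names made up for this post, not anything from the quoted article or from a real AI system.

```python
# Hypothetical mini knowledge base: each category has an optional parent
# category and a set of default properties (the "working assumptions").
KB = {
    "bird":    {"parent": None,   "defaults": {"flies": True, "sings": True}},
    "penguin": {"parent": "bird", "defaults": {"flies": False}},  # an exception
    "canary":  {"parent": "bird", "defaults": {}},                # inherits everything
}


def lookup(category, prop):
    """Return the most specific default for `prop`, walking up the parent chain.

    Returns None when nothing is known about the property.
    """
    while category is not None:
        entry = KB[category]
        if prop in entry["defaults"]:
            return entry["defaults"][prop]
        category = entry["parent"]
    return None


if __name__ == "__main__":
    print(lookup("canary", "flies"))   # default inherited from "bird"
    print(lookup("penguin", "flies"))  # exception overrides the default
    print(lookup("penguin", "sings"))  # inherited default, and probably wrong
```

The last line is the point: every exception has to be added by hand, and any default nobody thought to override (a singing penguin) quietly stays in place. That is the qualification problem in miniature.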

….they wanted to embed robotic features with emotive functions…

Social intelligence

Main article: Affective computing

Kismet, a robot with rudimentary social skills

Emotion and social skills[73] play two roles for an intelligent agent. First, it must be able to predict the actions of others, by understanding their motives and emotional states. (This involves elements of game theory, decision theory, as well as the ability to model human emotions and the perceptual skills to detect emotions.) Second, for good human-computer interaction, an intelligent machine needs to display emotions. At the very least it must appear polite and sensitive to the humans it interacts with. At best, it should have normal emotions itself.

….finally, having the ability to dream:)

Integrating the approaches

- Intelligent agent paradigm

- An intelligent agent is a system that perceives its environment and takes actions which maximize its chances of success. The simplest intelligent agents are programs that solve specific problems. The most complicated intelligent agents are rational, thinking humans.[92] The paradigm gives researchers license to study isolated problems and find solutions that are both verifiable and useful, without agreeing on one single approach. An agent that solves a specific problem can use any approach that works: some agents are symbolic and logical, some are sub-symbolic neural networks and others may use new approaches. The paradigm also gives researchers a common language to communicate with other fields (such as decision theory and economics) that also use concepts of abstract agents. The intelligent agent paradigm became widely accepted during the 1990s.[93]

Researchers have designed systems to build intelligent systems out of interacting intelligent agents in a multi-agent system.[94] A system with both symbolic and sub-symbolic components is a hybrid intelligent system, and the study of such systems is artificial intelligence systems integration. A hierarchical control system provides a bridge between sub-symbolic AI at its lowest, reactive levels and traditional symbolic AI at its highest levels, where relaxed time constraints permit planning and world modelling.[95] Rodney Brooks’ subsumption architecture was an early proposal for such a hierarchical system.
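The intelligent agent definition quoted above (perceive the environment, then take the action that maximizes the chance of success) can be sketched in a few lines of Python. This is a toy under stated assumptions: `choose_action`, `thermostat_score`, the 20-degree target and the two-degree effect of heating or cooling are all invented here for illustration.

```python
def choose_action(percept, actions, score):
    """Pick the action whose estimated outcome scores highest for this percept."""
    return max(actions, key=lambda action: score(percept, action))


def thermostat_score(temperature, action):
    """Hypothetical success measure: closer to the target temperature is better."""
    target = 20.0  # assumed target, in degrees
    effect = {"heat": 2.0, "cool": -2.0, "idle": 0.0}[action]  # assumed effects
    return -abs((temperature + effect) - target)


if __name__ == "__main__":
    actions = ["heat", "cool", "idle"]
    for reading in (15.0, 20.0, 25.0):
        print(reading, choose_action(reading, actions, thermostat_score))
```

Notice that the agent only ever optimizes the score it was handed. The purpose itself (why 20 degrees?) comes from outside the machine, from a person.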

So to me there is an understanding that needs to remain consistent in our views as we move forward here: what is created is not really the human being that we are, but a manifestation of it. I think people tend to “lose perspective” on human intelligence versus AI, so the issue then is to note these differences.

This distinction, to me, rests in “what outcomes are possible in the diversity of the human population, matched to a purpose for personal development toward an ideal.” No match can be found in AI for this creative attachment, which arises distinctively in each person and in each person’s probable outcomes.

The question is this: “if” all knowledge already existed, and “if” we were to have access to this “collective unconscious,” per se, then why could such thinking not point toward new paradigms for personal development within society? New science? AI already has all these knowledge factors included, so can it give outcomes by way of a “quantum leap??”:) No, it needs human intervention. Or can AI already give us that new science? You see? Then there would be “no need” for an Einstein?